This one is firmly grounded in what I believe I have mastered: simulation versus measurement, mostly in free field. Arrays of transducers on lines or screens are hypersensitive to source location and phase, and their cancellation spots move as frequency varies. But here is the thing: I found a way to train a machine-learning module. The idea is simple, and you will have fun understanding it.

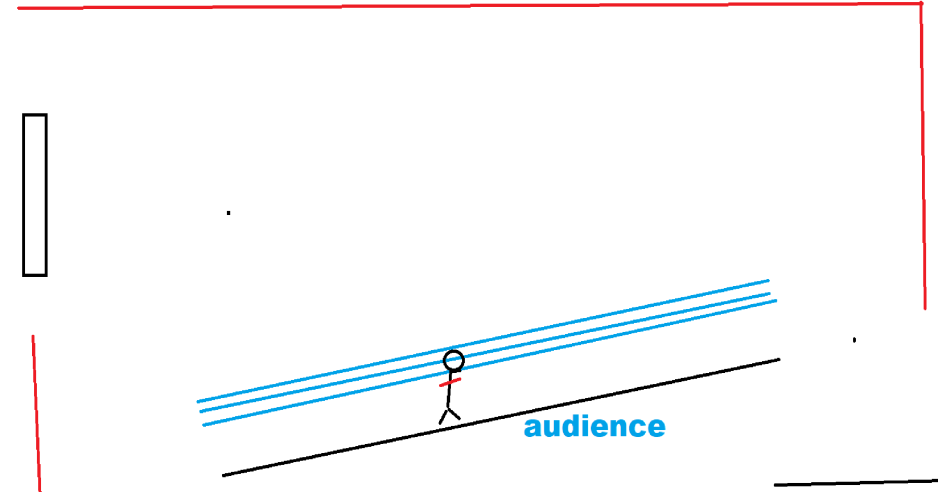

The governing picture — the blue lines for where we want sound, the red lines for where we do not.

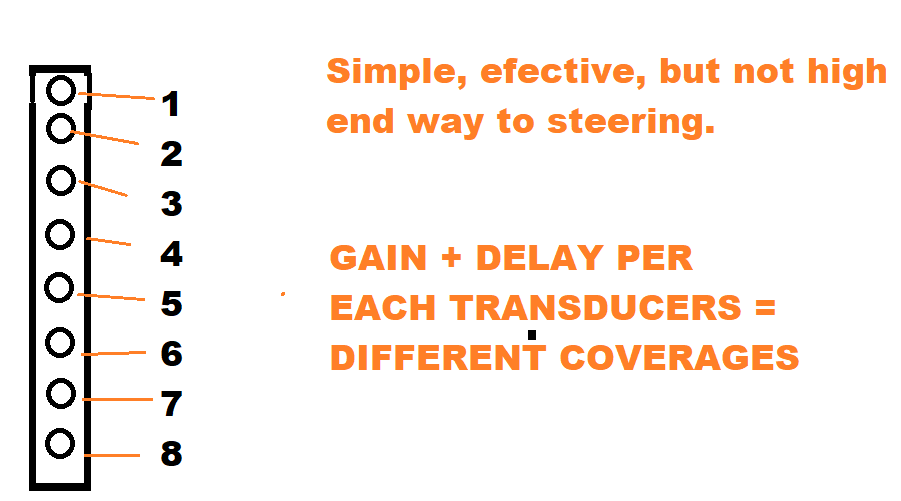

We already talked about the benefits of working in 2D in the very early stages of research.

2D framework recap — enough to teach, cheap enough to compute.
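To make the 2D picture concrete, here is a minimal sketch of a free-field simulation in a plane. It is not the framework used for the images in this post. It assumes ideal monopole sources with an `exp(-jkr)/r` transfer function, a speed of sound of 343 m/s, and a hypothetical layout of 8 sources spaced 0.2 m apart; the function name `pressure_field` is made up for illustration.

```python
import numpy as np

C = 343.0  # assumed speed of sound in air, m/s

def pressure_field(src_xy, weights, pts_xy, freq):
    """Complex pressure at each point from weighted free-field monopoles."""
    k = 2.0 * np.pi * freq / C                           # wavenumber, rad/m
    diff = pts_xy[:, None, :] - src_xy[None, :, :]       # (n_pts, n_src, 2)
    r = np.maximum(np.linalg.norm(diff, axis=-1), 1e-6)  # avoid divide by zero
    green = np.exp(-1j * k * r) / r                      # monopole transfer function
    return green @ weights                               # superposition over sources

# Hypothetical setup: 8 sources on a vertical line, 0.2 m apart.
src = np.column_stack([np.zeros(8), 0.2 * (np.arange(8) - 3.5)])
w = np.ones(8, dtype=complex)                            # one unit impulse per source

# Listening points on a 20 m arc in front of the array.
angles_deg = np.linspace(-90.0, 90.0, 181)
a = np.deg2rad(angles_deg)
pts = 20.0 * np.column_stack([np.cos(a), np.sin(a)])

p = pressure_field(src, w, pts, freq=1000.0)
level_db = 20.0 * np.log10(np.abs(p) / np.abs(p).max())  # normalized to the peak
peak_angle = angles_deg[np.argmax(level_db)]             # direction of the main lobe
```

Everything is a matrix product, so sweeping frequencies or source weights stays cheap, which is the whole point of starting in 2D.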

Each frequency has different lobes, and so a different coverage challenge. This is not a problem for a spreadsheet, and that is why ML enters the picture.
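A quick way to see how the lobe structure changes with frequency is to count the lobes of a far-field array factor. The sketch below assumes a uniform line of 8 ideal sources, 0.2 m apart, with equal weights; `array_factor` and `count_lobes` are illustrative helpers, not part of any library.

```python
import numpy as np

C = 343.0  # assumed speed of sound in air, m/s

def array_factor(y, weights, freq, theta):
    """Far-field response of sources at heights y, versus angle theta (rad)."""
    k = 2.0 * np.pi * freq / C
    phase = k * np.outer(np.sin(theta), y)   # (n_theta, n_src) path-length phase
    return np.exp(1j * phase) @ weights

def count_lobes(mag):
    """Count interior local maxima of a sampled magnitude pattern."""
    mid = mag[1:-1]
    return int(np.sum((mid > mag[:-2]) & (mid >= mag[2:])))

y = 0.2 * (np.arange(8) - 3.5)               # hypothetical source heights, m
w = np.ones(8, dtype=complex)
theta = np.deg2rad(np.linspace(-90.0, 90.0, 3601))

lobes = {
    f: count_lobes(np.abs(array_factor(y, w, f, theta)))
    for f in (500.0, 1000.0, 2000.0, 4000.0)
}
for f, n in lobes.items():
    print(f"{f:6.0f} Hz: {n} lobes")
```

The same geometry produces a different pattern at every frequency, so any fix has to be found per frequency rather than once for the whole band.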

But much more can be done with the superposition technique — maybe the holy grail of active muting of non-desired lobes (V2).

Thinking outside the box.

We have data. We have separation between sources, and places where we want sound and places where we don't.
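One way to turn "where we want sound and where we don't" into a number is a zone contrast: the mean level over the wanted points minus the mean level over the unwanted points. This is only a sketch of a possible score, not the training target used here. It assumes free-field monopoles, a hypothetical 8-source line, and two made-up arcs standing in for the blue and red lines.

```python
import numpy as np

C = 343.0  # assumed speed of sound in air, m/s

def zone_level_db(src_xy, weights, zone_xy, freq):
    """Mean-square pressure level (dB, arbitrary reference) over a zone."""
    k = 2.0 * np.pi * freq / C
    r = np.linalg.norm(zone_xy[:, None, :] - src_xy[None, :, :], axis=-1)
    p = (np.exp(-1j * k * r) / r) @ weights
    return 10.0 * np.log10(np.mean(np.abs(p) ** 2))

def contrast_db(src_xy, weights, wanted_xy, unwanted_xy, freq):
    """Wanted-zone level minus unwanted-zone level, in dB."""
    return (zone_level_db(src_xy, weights, wanted_xy, freq)
            - zone_level_db(src_xy, weights, unwanted_xy, freq))

def arc(radius, deg_from, deg_to, n=41):
    """Points on an arc centred on the array (hypothetical zone shape)."""
    a = np.deg2rad(np.linspace(deg_from, deg_to, n))
    return radius * np.column_stack([np.cos(a), np.sin(a)])

src = np.column_stack([np.zeros(8), 0.2 * (np.arange(8) - 3.5)])
w = np.ones(8, dtype=complex)
wanted = arc(20.0, -10.0, 10.0)      # "blue": where we want sound
unwanted = arc(20.0, 30.0, 60.0)     # "red": where we do not

score = contrast_db(src, w, wanted, unwanted, freq=1000.0)
print(f"contrast at 1 kHz: {score:.1f} dB")
```

Because the score is a level difference, it does not change when all source weights are scaled together, so it measures the shape of the coverage and not its loudness.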

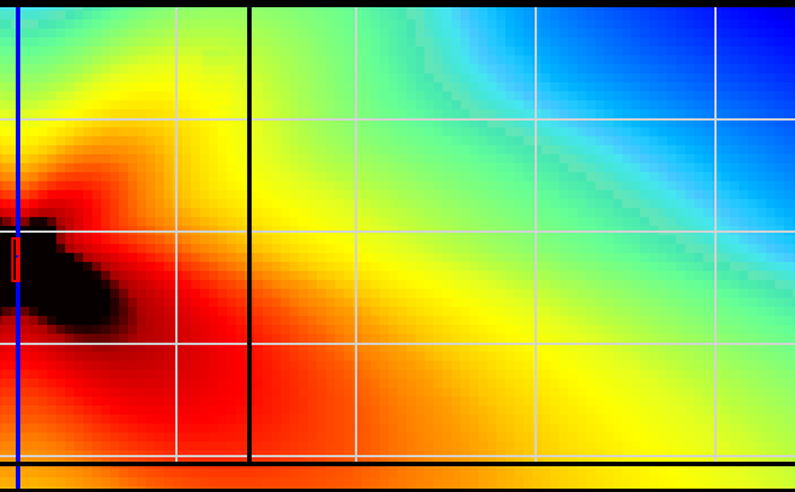

The upper image shows one impulse per source: a natural, good response for part of the area. But there is a crazy, powerful upper lobe that will take away intelligibility, or simply the "promised" focused sound.

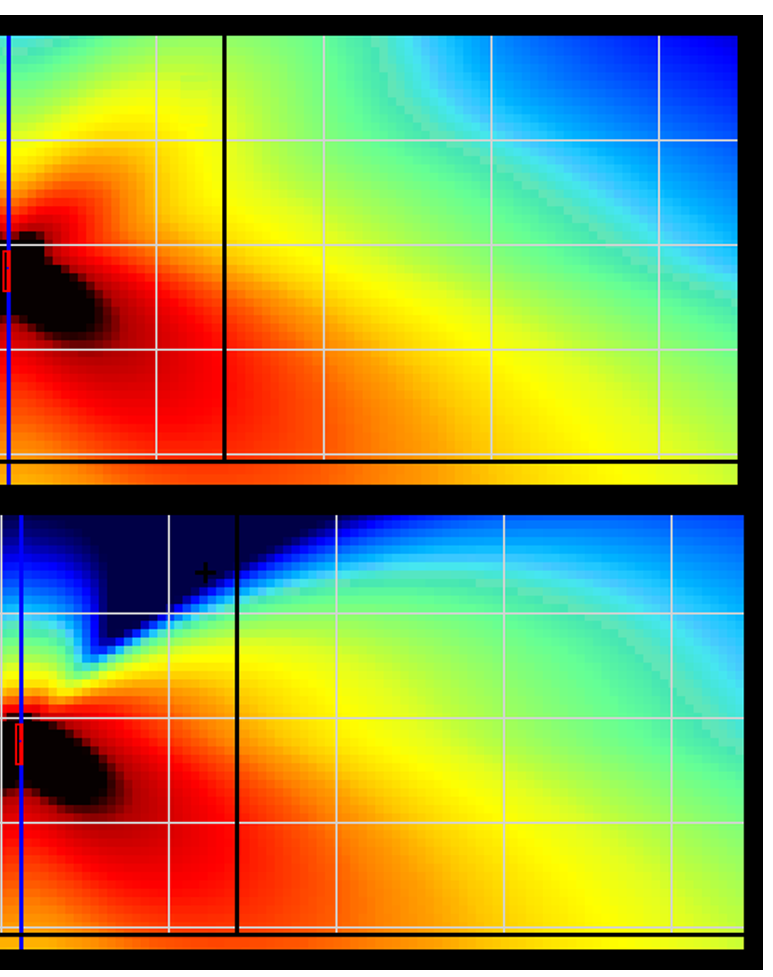

Now, the confidential part: a tricky second impulse. And we got exactly this transformation. Above, the original. Below, the magic.

This magic is just a second impulse. It is not made for covering a second area; it is made for muting the unwanted, crazy roof lobe. For the muting to work, 1.5 dB had to be sacrificed. Efficient enough.
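The superposition idea can be sketched at a single frequency, where a second impulse with gain g and delay τ on a source acts like one extra complex weight. The example below is not the confidential method; it swaps in a plain regularized least-squares solve. It looks for second-impulse weights `w2` that cancel the first impulse's field in an unwanted zone while disturbing the wanted zone as little as possible. The geometry, the zones, and the values of `alpha` and `mu` are all assumptions.

```python
import numpy as np

C = 343.0  # assumed speed of sound in air, m/s

def transfer(src_xy, pts_xy, freq):
    """Free-field monopole transfer matrix, shape (n_pts, n_src)."""
    k = 2.0 * np.pi * freq / C
    r = np.linalg.norm(pts_xy[:, None, :] - src_xy[None, :, :], axis=-1)
    return np.exp(-1j * k * r) / r

def arc(radius, deg_from, deg_to, n=41):
    a = np.deg2rad(np.linspace(deg_from, deg_to, n))
    return radius * np.column_stack([np.cos(a), np.sin(a)])

def level_db(G, w):
    return 10.0 * np.log10(np.mean(np.abs(G @ w) ** 2))

def second_impulse_weights(G_wanted, G_unwanted, w1, alpha=10.0, mu=1e-6):
    """Minimize |G_u (w1 + w2)|^2 + alpha |G_w w2|^2 + mu |w2|^2 over w2."""
    A = G_unwanted.conj().T @ G_unwanted
    B = G_wanted.conj().T @ G_wanted
    lhs = A + alpha * B + mu * np.eye(len(w1))
    return -np.linalg.solve(lhs, A @ w1)   # normal equations of the cost above

freq = 1000.0
src = np.column_stack([np.zeros(8), 0.2 * (np.arange(8) - 3.5)])
G_w = transfer(src, arc(20.0, -10.0, 10.0), freq)   # wanted zone
G_u = transfer(src, arc(20.0, 30.0, 60.0), freq)    # unwanted "roof" zone

w1 = np.ones(8, dtype=complex)                      # first impulse per source
w2 = second_impulse_weights(G_w, G_u, w1)           # second impulse per source

unwanted_before = level_db(G_u, w1)
unwanted_after = level_db(G_u, w1 + w2)
wanted_loss_db = level_db(G_w, w1) - level_db(G_w, w1 + w2)

# Read each complex weight back as a gain and a delay within one period.
gains = np.abs(w2)
delays_s = (-np.angle(w2) % (2.0 * np.pi)) / (2.0 * np.pi * freq)
```

A larger `alpha` protects the wanted zone more and mutes the unwanted zone less, which is the same kind of trade-off as giving up some level in exchange for a cleaner roof. A real impulse has to do this at every frequency at once, and that is where the difficulty lies.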

In the next installments of these diaries I will show how to really get into this, which is still a fairly complex task, because each frequency has different lobes and a different coverage challenge.

As the legend goes: with a FIR filter, magnitude and phase can be set independently at each frequency you can control.
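The FIR claim can be checked with a frequency-sampling sketch: pick a magnitude and a phase for each FFT bin, inverse-transform to get real taps, and confirm that the filter reproduces both at those bins. The sample rate, tap count, and target curves below are arbitrary assumptions, and `fir_from_response` is an illustrative helper. The only constraint for real taps is that the DC and Nyquist bins are real, so the phase is set to zero there.

```python
import numpy as np

fs = 48000.0                                   # assumed sample rate, Hz
n_taps = 64                                    # assumed filter length (even)
freqs = np.fft.rfftfreq(n_taps, d=1.0 / fs)    # bin frequencies, DC to Nyquist
n_bins = freqs.size

# Arbitrary targets: a magnitude step and an unrelated phase curve.
mag = np.where(freqs < 4000.0, 1.0, 0.5)
phase = -0.8 * np.sin(np.pi * np.arange(n_bins) / (n_bins - 1))  # zero at both ends

def fir_from_response(mag, phase, n_taps):
    """Real FIR taps whose DFT matches mag * exp(j * phase) at each bin."""
    H = mag * np.exp(1j * phase)
    return np.fft.irfft(H, n=n_taps)

h = fir_from_response(mag, phase, n_taps)
H_back = np.fft.rfft(h)                        # response of the designed taps

# Change the magnitude only: the phase at every bin stays where it was.
h_louder = fir_from_response(2.0 * mag, phase, n_taps)
phase_louder = np.angle(np.fft.rfft(h_louder))
```

The match is exact only at the sampled bins, and the taps come out circularly wrapped, so a practical filter would also need a bulk delay and a check of the response between bins.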